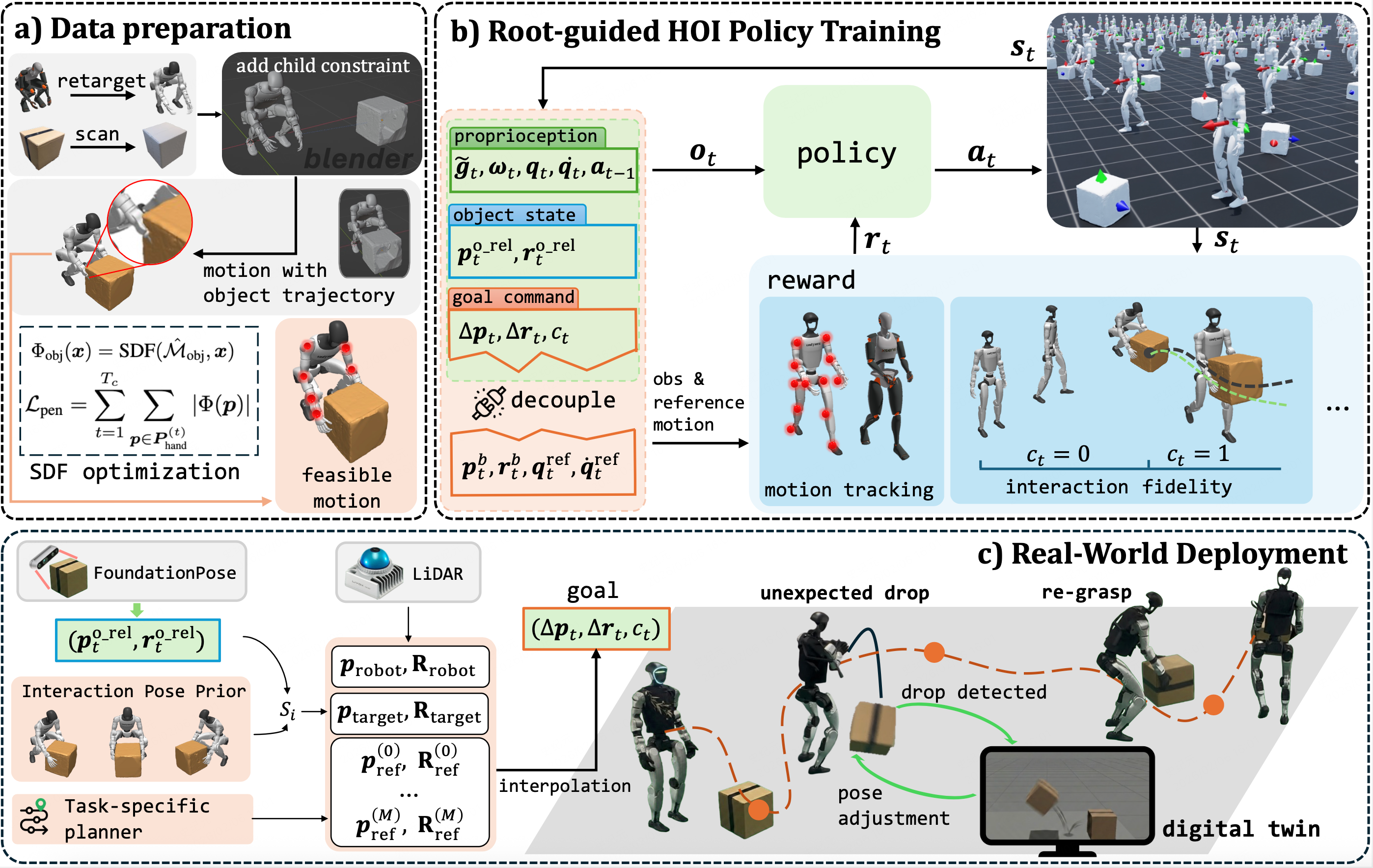

Executing reliable Humanoid-Object Interaction (HOI) tasks with humanoid robots is hindered by the lack of generalized control interfaces and robust closed-loop perception mechanisms. In this work, we introduce Perceptive Root-guided Humanoid-Object Interaction (Pro-HOI), a generalizable framework for robust humanoid loco-manipulation. First, we collect box-carrying motions that are suitable for real-world deployment and mitigate penetration artifacts through a Signed Distance Field (SDF) loss. Second, we propose a novel training framework that conditions the policy on a desired root trajectory while using the reference motion exclusively as a reward. This design not only eliminates the need for intricate reward tuning but also establishes the root trajectory as a universal interface for high-level planners, enabling simultaneous navigation and loco-manipulation. Furthermore, to ensure operational reliability, we incorporate a persistent object estimation module. By fusing real-time detection with a Digital Twin, this module allows the robot to autonomously detect slippage and trigger re-grasping maneuvers. Empirical validation on a Unitree G1 robot demonstrates that Pro-HOI significantly outperforms baselines in generalization and robustness, achieving reliable long-horizon execution in complex real-world scenarios.
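The slippage check is described only at a high level. As a minimal sketch, assuming the Digital Twin treats the grasped box as rigidly attached to the hand, one could store the hand-to-object offset at grasp time, propagate the object with the hand whenever detection drops out, and flag slippage when a fresh detection drifts from the twin's prediction by more than a position tolerance. The class `ObjectTwin`, the helper `make_pose`, and the 5 cm threshold are hypothetical illustrations rather than the authors' implementation; all poses are assumed to be 4×4 homogeneous transforms in a shared world frame.

```python
import numpy as np


def make_pose(R, t):
    """Build a 4x4 homogeneous transform from a 3x3 rotation and a translation."""
    T = np.eye(4)
    T[:3, :3] = R
    T[:3, 3] = t
    return T


class ObjectTwin:
    """Hypothetical persistent object estimator (illustration only)."""

    def __init__(self, pos_tol=0.05):
        self.pos_tol = pos_tol    # allowed position drift in metres (assumed value)
        self.T_hand_obj = None    # object pose in the hand frame, fixed at grasp time
        self.T_world_obj = None   # current best estimate of the object pose

    def on_grasp(self, T_world_hand, T_world_obj):
        # Record the rigid hand-to-object offset the twin assumes while carrying.
        self.T_hand_obj = np.linalg.inv(T_world_hand) @ T_world_obj
        self.T_world_obj = T_world_obj

    def update(self, T_world_hand, T_world_obj_detected=None):
        """Return True when slippage is detected and a re-grasp should be triggered.
        Must be called after on_grasp()."""
        # Twin prediction: the object moves rigidly with the hand.
        T_pred = T_world_hand @ self.T_hand_obj
        if T_world_obj_detected is None:
            # No detection this frame (e.g. occlusion): fall back on the twin.
            self.T_world_obj = T_pred
            return False
        self.T_world_obj = T_world_obj_detected
        drift = np.linalg.norm(T_world_obj_detected[:3, 3] - T_pred[:3, 3])
        return bool(drift > self.pos_tol)


if __name__ == "__main__":
    I = np.eye(3)
    twin = ObjectTwin(pos_tol=0.05)
    twin.on_grasp(make_pose(I, [0.5, 0.0, 0.8]), make_pose(I, [0.6, 0.0, 0.8]))
    hand = make_pose(I, [0.7, 0.0, 0.8])  # robot has walked 0.2 m forward
    print(twin.update(hand))                                 # False: twin predicts x = 0.8
    print(twin.update(hand, make_pose(I, [0.8, 0.0, 0.7])))  # True: box dropped 0.1 m
```

Comparing only translations keeps the sketch short; a fuller check would also bound the rotational drift between the detected and predicted poses.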

Overview of Pro-HOI: a) Data Preparation: We first collect human motion clips using a motion capture (mocap) system and augment them with object geometries in Blender. We then use SDF-based optimization to generate physically feasible reference motions. b) Root-Guided Policy Learning: The RL policy is trained to perform whole-body interaction skills conditioned on the desired root trajectory, while using the reference motion as a reward signal. c) Real-world Deployment: We integrate FoundationPose for 6D object pose estimation and FAST-LIO2 for root pose estimation, and combine them with the Interaction Pose Prior and a task-specific planner to generate target root trajectories. The full stack runs entirely onboard a Jetson NX with a D435i camera and a Mid-360 LiDAR, achieving robust sim-to-real transfer.
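The SDF-based cleanup in the data-preparation stage can be illustrated with a simple penetration penalty. This sketch assumes the carried object is a box with known half-extents, that the body is represented by sampled surface points, and that the loss is the sum of squared penetration depths; the function names and this exact loss form are assumptions, not the paper's formulation. It only evaluates the loss with NumPy; an actual optimization over reference motions would need an autodiff framework to propagate gradients back to the body and object poses.

```python
import numpy as np


def box_sdf(points, half_extents):
    """Signed distance from points (N, 3), given in the box frame, to an
    axis-aligned box centred at the origin: negative inside, positive outside."""
    q = np.abs(points) - half_extents
    outside = np.linalg.norm(np.maximum(q, 0.0), axis=1)  # distance when outside
    inside = np.minimum(q.max(axis=1), 0.0)               # depth when inside
    return outside + inside


def penetration_loss(body_points_world, T_world_box, half_extents):
    """Sum of squared penetration depths of body points inside the box."""
    T_box_world = np.linalg.inv(T_world_box)
    n = len(body_points_world)
    pts_h = np.hstack([body_points_world, np.ones((n, 1))])  # homogeneous coords
    pts_box = (T_box_world @ pts_h.T).T[:, :3]               # world -> box frame
    d = box_sdf(pts_box, np.asarray(half_extents, dtype=float))
    # Only negative distances (penetration) contribute to the loss.
    return float(np.sum(np.minimum(d, 0.0) ** 2))


if __name__ == "__main__":
    half = [0.2, 0.15, 0.1]  # box half-extents in metres (example values)
    pts = np.array([[0.0, 0.0, 0.0], [0.3, 0.0, 0.0], [0.15, 0.0, 0.0]])
    print(penetration_loss(pts, np.eye(4), half))  # about 0.0125 (= 0.1**2 + 0.05**2)
```

Squaring the hinge keeps the penalty zero for non-penetrating points and smooth at the contact boundary, which is convenient when it is added to other motion-retargeting terms.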

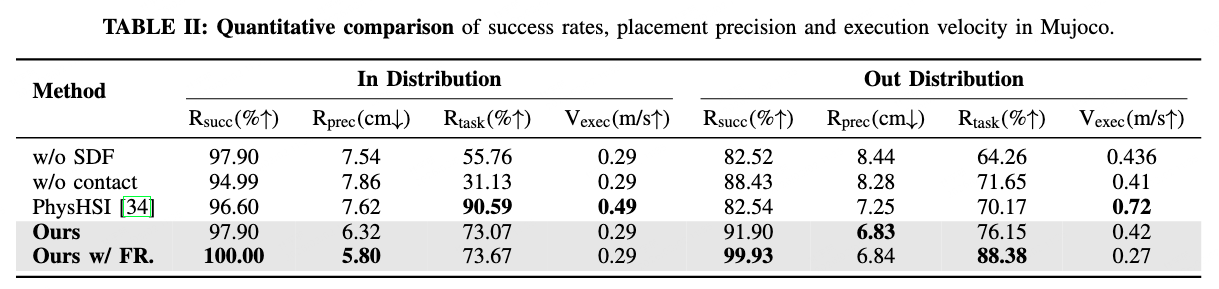

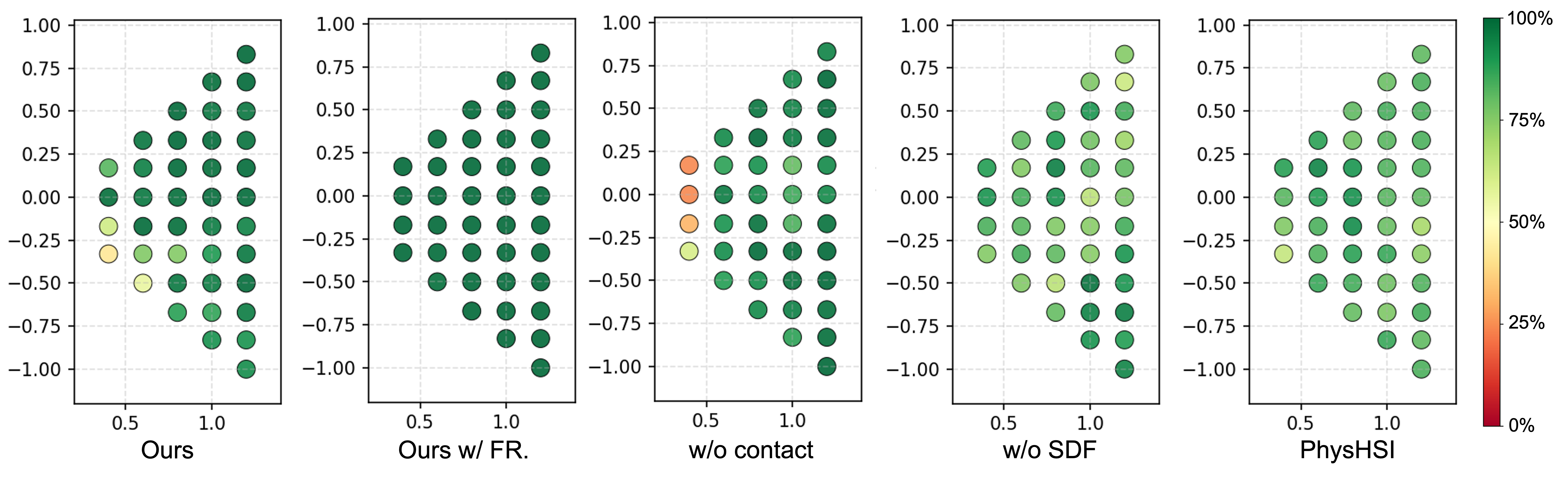

The ID set uses exactly the robot and object configurations from the training distribution. The OOD set tests the policy by sampling the initial object position and the target placement position across the workspace. Specifically, we created a grid with $0.2$ m spacing within a trapezoidal region defined by $x \in [0.4, 1.4]$ m and $y \in [-x, x]$ m. For each grid point, we evaluated object yaw orientations from $\{0^\circ, 15^\circ, 30^\circ, 45^\circ\}$. Furthermore, target placement locations were sampled at $10^\circ$ intervals along a circle of radius $4$ m centered on the robot. This extensive evaluation protocol yields a total of 5,756 distinct task scenarios.
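To make the OOD protocol concrete, the sketch below enumerates its factors. It assumes the grid is anchored at $x = 0.4$ m and symmetric about $y = 0$, that the robot sits at the origin, and that placement targets span the full circle; the function names are hypothetical. The text does not state how the 5,756 reported scenarios are selected from these factors, and under these assumptions the unfiltered product (60 grid points × 4 yaws × 36 targets) is larger, so the sketch enumerates candidates only and applies no selection step.

```python
import math

YAWS_DEG = (0, 15, 30, 45)  # object yaw orientations from the protocol


def ood_grid(spacing=0.2, x_min=0.4, x_max=1.4):
    """Grid points (x, y) in the trapezoid x in [x_min, x_max], |y| <= x.
    Assumes the y grid is symmetric about y = 0."""
    points = []
    n_x = int(round((x_max - x_min) / spacing))
    for i in range(n_x + 1):
        x = round(x_min + i * spacing, 6)
        n_y = int(math.floor(x / spacing + 1e-9))  # epsilon guards float error
        for j in range(-n_y, n_y + 1):
            points.append((x, round(j * spacing, 6)))
    return points


def placement_targets(radius=4.0, step_deg=10):
    """Targets on a circle around the robot (robot at the origin)."""
    return [(radius * math.cos(math.radians(a)), radius * math.sin(math.radians(a)))
            for a in range(0, 360, step_deg)]


def candidate_scenarios():
    """Unfiltered product of grid point x yaw x placement target."""
    for x, y in ood_grid():
        for yaw in YAWS_DEG:
            for target in placement_targets():
                yield (x, y, yaw, target)


if __name__ == "__main__":
    print(len(ood_grid()), len(placement_targets()))  # 60 36
```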

Spatial distribution of grasp success rates across the OOD evaluation set. The color scale indicates the success rate: darker green denotes higher success rates, while red/yellow indicates failures.

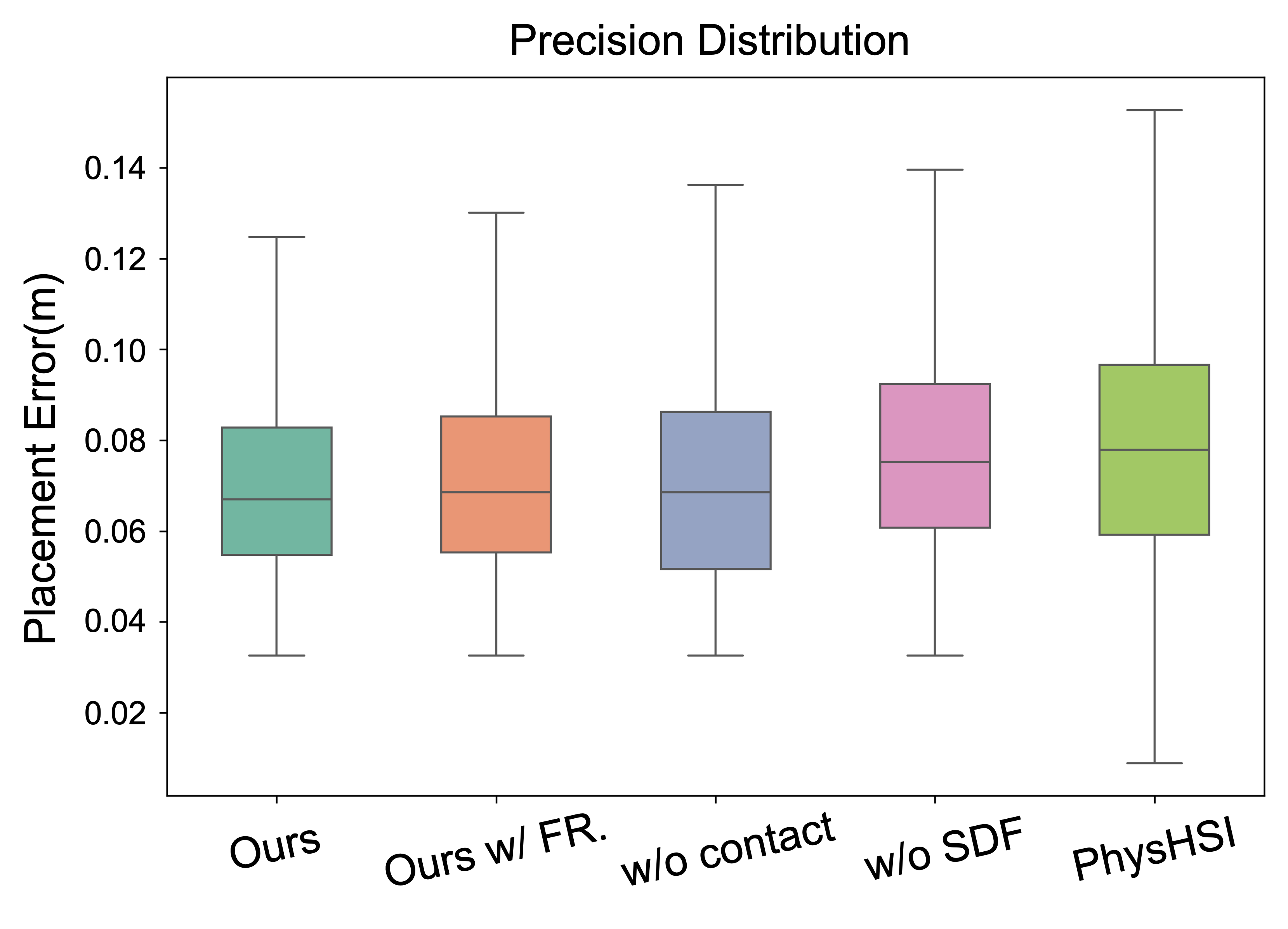

Statistical distribution of placement precision across different methods.

Deployed on a Unitree G1 humanoid, with all computation running onboard.

```bibtex
@article{lin2026prohoi,
  title   = {Pro-HOI: Perceptive Root-guided Humanoid-Object Interaction},
  author  = {Lin, Yuhang and Shi, Jiyuan and Wang, Dewei and Kong, Jipeng and Liu, Yong and Bai, Chenjia and Li, Xuelong},
  journal = {arXiv preprint arXiv:2603.01126},
  year    = {2026}
}
```